Observability is an indispensable concept in continuous delivery, but it can be a little bewildering. Luckily for us, there are a number of tools and techniques, such as CI/CD metrics, that make the job easier!

Metric Monitoring

One way to improve observability in a continuous delivery environment is to monitor and analyze key metrics from builds and deployments. With tools such as Prometheus and its integrations into CI/CD pipelines, gathering and analyzing metrics is straightforward. Start tracking these metrics early: they will help you debug issues and optimize your stack.
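
Prometheus collects metrics as plain text in its exposition format: a `# HELP` line, a `# TYPE` line, then one sample per line with optional labels. The following is a minimal, standard-library-only sketch of rendering a build metric in that format. The metric name `ci_build_duration_seconds` and its labels are hypothetical; in practice you would more likely use an official client library such as `prometheus_client`, or push results from short-lived CI jobs to a Pushgateway. Escaping of the HELP text itself is omitted for brevity.

```python
from typing import Dict, List, Tuple

# One sample is a (labels, value) pair. Metric names and labels are hypothetical.
Sample = Tuple[Dict[str, str], float]


def escape_label_value(value: str) -> str:
    """Escape backslashes, newlines and double quotes in a label value."""
    return value.replace("\\", "\\\\").replace("\n", "\\n").replace('"', '\\"')


def render_metric(name: str, help_text: str, metric_type: str,
                  samples: List[Sample]) -> str:
    """Render one metric family in the Prometheus text exposition format."""
    lines = [f"# HELP {name} {help_text}", f"# TYPE {name} {metric_type}"]
    for labels, value in samples:
        if labels:
            # Sort labels so the output is deterministic.
            pairs = ",".join(
                f'{key}="{escape_label_value(val)}"'
                for key, val in sorted(labels.items())
            )
            lines.append(f"{name}{{{pairs}}} {value}")
        else:
            lines.append(f"{name} {value}")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(render_metric(
        "ci_build_duration_seconds",
        "Duration of the most recent build.",
        "gauge",
        [({"pipeline": "web", "branch": "main"}, 312.5)],
    ), end="")
```

Serving this text from an HTTP endpoint that Prometheus scrapes, or pushing it to a Pushgateway, is left out of the sketch.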

Important metrics include:

- Bug rates

- The time taken to resolve a broken build

- Deployment-caused production downtime

- A build’s duration in comparison to past builds

Build and deployment metrics are vital to understanding what is going wrong in your development or deployment processes.
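
As an illustration, two of the metrics listed above can be derived from a simple history of build records. The sketch assumes a hypothetical `Build` record with a finish time, a duration and a pass/fail flag (most CI systems expose something similar through their APIs). It measures time to resolve a broken build as the time from the first failure in a streak to the next successful build, and compares the latest build's duration with the median of recent builds.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import List, Optional


@dataclass
class Build:
    """A hypothetical build record."""
    finished_at: datetime
    duration_s: float
    succeeded: bool


def times_to_resolve(builds: List[Build]) -> List[timedelta]:
    """For each broken streak, the time from its first failure to the next success."""
    resolved: List[timedelta] = []
    broken_since: Optional[datetime] = None
    for build in sorted(builds, key=lambda b: b.finished_at):
        if not build.succeeded and broken_since is None:
            broken_since = build.finished_at  # The build has just broken.
        elif build.succeeded and broken_since is not None:
            resolved.append(build.finished_at - broken_since)
            broken_since = None  # The build is green again.
    return resolved


def duration_vs_history(builds: List[Build], window: int = 10) -> Optional[float]:
    """Latest build duration divided by the median of the previous `window` builds."""
    ordered = sorted(builds, key=lambda b: b.finished_at)
    if len(ordered) < 2:
        return None  # Nothing to compare against yet.
    history = [b.duration_s for b in ordered[-window - 1:-1]]
    return ordered[-1].duration_s / median(history)


if __name__ == "__main__":
    t0 = datetime(2024, 1, 1, 12, 0)
    builds = [
        Build(t0, 300, True),
        Build(t0 + timedelta(minutes=30), 310, False),  # build breaks
        Build(t0 + timedelta(minutes=50), 305, False),  # still broken
        Build(t0 + timedelta(minutes=90), 320, True),   # fixed
        Build(t0 + timedelta(minutes=120), 600, True),  # unusually slow
    ]
    print("time to resolve:", times_to_resolve(builds))
    print(f"latest vs. median of recent builds: {duration_vs_history(builds):.2f}x")
```

Using the median rather than the mean keeps one unusually slow or fast build from skewing the baseline; a ratio well above 1 is a cue to look at what changed.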

Staging Environments

One of the most powerful tricks up a developer’s sleeve is a staging environment. Testing in staging gives you confidence that your code has had a proper run-through before it reaches production.

However, the biggest challenge of running a staging environment is the effort it takes to keep it matching production. A common way to mitigate this is “Infrastructure as Code” (IaC), which lets you declare your cloud resources in code. When you need a new environment, you run the same code again, which keeps your environments consistent.
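
Real IaC tools such as Terraform, AWS CloudFormation or Pulumi express this declaratively. The sketch here only models the idea in plain Python: a single function declares an environment, staging and production are both produced from it, and a check flags drift in any setting that is not supposed to differ. The resource settings, values and the `ENVIRONMENT_SPECIFIC` set are all hypothetical.

```python
from typing import Any, Dict, Tuple

# Settings that are allowed to differ between environments (hypothetical choice).
ENVIRONMENT_SPECIFIC = {"name", "instance_count", "database_url"}


def declare_environment(env: str, instance_count: int, database_url: str) -> Dict[str, Any]:
    """Declare one environment's resources; every environment uses this same code."""
    return {
        "name": f"app-{env}",
        "instance_type": "t3.medium",
        "instance_count": instance_count,
        "runtime": "python3.12",
        "database_url": database_url,
        "health_check_path": "/healthz",
    }


def unexpected_differences(a: Dict[str, Any], b: Dict[str, Any]) -> Dict[str, Tuple[Any, Any]]:
    """Return settings that differ between two environments but are not meant to."""
    keys = set(a) | set(b)
    return {
        key: (a.get(key), b.get(key))
        for key in sorted(keys)
        if key not in ENVIRONMENT_SPECIFIC and a.get(key) != b.get(key)
    }


if __name__ == "__main__":
    staging = declare_environment("staging", 1, "postgres://staging-db/app")
    production = declare_environment("production", 4, "postgres://prod-db/app")
    print(unexpected_differences(staging, production))  # no drift: {}

    production["instance_type"] = "t3.large"  # simulate a manual change in production
    print(unexpected_differences(staging, production))
```

Keeping the list of intentional differences small and explicit is the point of the design: anything outside it that diverges is treated as drift to investigate.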

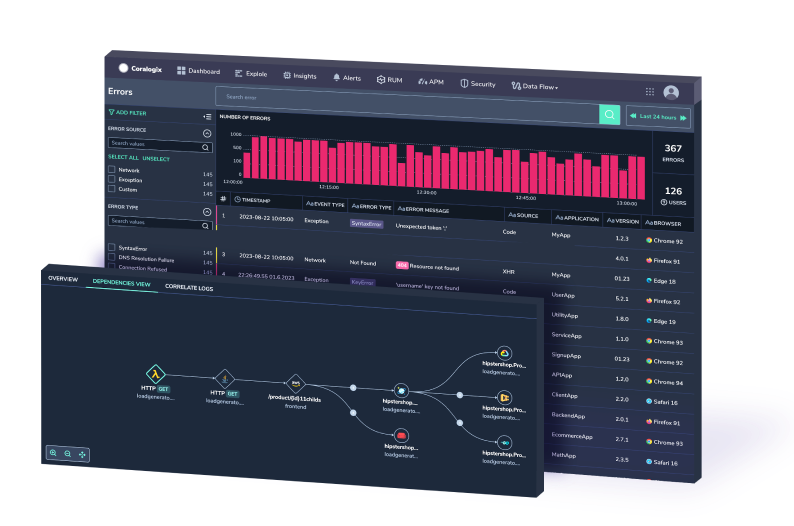

Centralized Logging

As we move into a world of containerized applications, our software components are becoming increasingly separated, which can make issues harder to debug. It is worth putting some thought into aggregating the application logs from each of our services into one central location. Coralogix offers a managed ELK (Elasticsearch, Logstash, Kibana) stack, supported by machine learning and integrations with major CI/CD tools such as Jenkins.
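
Centralized logs are much easier to search when every service writes structured entries that carry its own name. The sketch below uses only Python's standard `logging` module to emit one JSON object per line, tagged with a `service` field; Elasticsearch can then index each entry as a document. The field names and the `checkout-service` name are hypothetical, and shipping the lines to the central store (for example with a log agent) is not shown.

```python
import json
import logging
import sys
from datetime import datetime, timezone


class JsonFormatter(logging.Formatter):
    """Format each log record as a single JSON object tagged with its service."""

    def __init__(self, service: str) -> None:
        super().__init__()
        self.service = service

    def format(self, record: logging.LogRecord) -> str:
        entry = {
            "timestamp": datetime.fromtimestamp(record.created, tz=timezone.utc).isoformat(),
            "service": self.service,
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        }
        if record.exc_info:
            entry["exception"] = self.formatException(record.exc_info)
        return json.dumps(entry)


def make_logger(service: str, stream=sys.stdout) -> logging.Logger:
    """Create a logger that writes JSON lines for `service` to `stream`."""
    logger = logging.getLogger(service)
    logger.setLevel(logging.INFO)
    handler = logging.StreamHandler(stream)
    handler.setFormatter(JsonFormatter(service))
    logger.handlers = [handler]  # Replace handlers so repeated calls don't duplicate output.
    logger.propagate = False     # Keep records out of the root logger's handlers.
    return logger


if __name__ == "__main__":
    log = make_logger("checkout-service")
    log.info("order %s placed", "A-1001")
```

Writing to stdout fits containerized deployments, where the container runtime or a log agent typically collects each container's output and forwards it.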

Continuous Delivery Deployment Patterns

We can also follow patterns such as Blue/Green deployments, where we always run two twinned “production” instances or instance groups and swap their roles at each release: one serves production while the other acts as staging. This gives us confidence that both environments are reliably configured and match each other. When a deployment is done, however, it’s important to ensure that only environment-specific infrastructure is switched, as you don’t want to accidentally point your production environment at your staging database. Patterns such as these are often easy to implement whatever your chosen provider might be. Within AWS, for example, a Blue/Green deployment could simply be a change to the Route53 record for your domain at “app.example.com”, pointing it from one instance, load balancer, Lambda, etc., to another!
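
The swap logic can be sketched without any cloud calls. Here a hypothetical in-memory `Router` stands in for the DNS record for “app.example.com”; swapping changes only which environment the record points at, and a readiness check refuses to go live with an environment that is not using the production database. In AWS, the actual record update would typically be a Route53 `ChangeResourceRecordSets` call; all endpoints, versions and connection strings below are made up.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# Hypothetical production database connection string.
PRODUCTION_DB = "postgres://prod-db.internal/app"


@dataclass
class Environment:
    name: str
    endpoint: str       # e.g. a load balancer hostname
    version: str
    database_url: str


@dataclass
class Router:
    """Stands in for a DNS record such as app.example.com."""
    record: str
    environments: Dict[str, Environment]
    live: str

    def target(self) -> str:
        return self.environments[self.live].endpoint

    def idle(self) -> str:
        return next(name for name in self.environments if name != self.live)

    def swap(self, is_ready: Callable[[Environment], bool]) -> str:
        """Point the record at the idle environment if it passes `is_ready`."""
        candidate = self.idle()
        if not is_ready(self.environments[candidate]):
            raise RuntimeError(f"{candidate} is not ready; keeping {self.live} live")
        # Only the routing changes; each environment keeps its own settings.
        self.live = candidate
        return self.live


def ready_for_production(env: Environment) -> bool:
    """Refuse to go live unless the environment talks to the production database."""
    return env.database_url == PRODUCTION_DB


if __name__ == "__main__":
    router = Router(
        record="app.example.com",
        environments={
            "blue": Environment("blue", "blue-lb.example.internal", "1.4.0", PRODUCTION_DB),
            "green": Environment("green", "green-lb.example.internal", "1.5.0", PRODUCTION_DB),
        },
        live="blue",
    )
    router.swap(ready_for_production)
    print(router.record, "->", router.target())
```

DNS-based swaps are not instantaneous: resolvers may keep returning the old record until its TTL expires, so it is sensible to keep the previous environment running until traffic has drained. Keeping it around also makes rollback a second swap.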

Machine Learning & Continuous Delivery

If you’re worried about all the possible issues that could crop up in your CI/CD processes, you may wish there was someone or something to manage them for you. This is where machine learning can come in very handy. Because many CI/CD issues follow fairly regular patterns, ML tools such as Coralogix’s anomaly detection can save you time by learning your system’s log sequences and using them to detect issues as they happen, with little human monitoring or intervention once setup is done.
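
To give a feel for “learning log sequences”, here is a deliberately simple stand-in, not Coralogix’s method: a bigram model that records which log-step transitions occur in normal pipeline runs and flags any transition it has not seen at least `min_count` times. It assumes log lines have already been reduced to templates such as `"run tests"`; the step names are hypothetical.

```python
from collections import Counter
from typing import Iterable, List, Tuple

Transition = Tuple[str, str]


class TransitionModel:
    """Learns which log-template transitions occur in normal runs (a bigram model)."""

    def __init__(self, min_count: int = 1) -> None:
        self.counts: Counter = Counter()
        self.min_count = min_count

    @staticmethod
    def _transitions(run: List[str]) -> List[Transition]:
        # Pad with markers so an unusual first or last step is caught too.
        steps = ["<start>"] + run + ["<end>"]
        return list(zip(steps, steps[1:]))

    def fit(self, runs: Iterable[List[str]]) -> "TransitionModel":
        for run in runs:
            self.counts.update(self._transitions(run))
        return self

    def anomalies(self, run: List[str]) -> List[Transition]:
        """Return transitions seen fewer than `min_count` times during training."""
        return [t for t in self._transitions(run) if self.counts[t] < self.min_count]


if __name__ == "__main__":
    normal = [["checkout", "install deps", "run tests", "build image", "push image"]] * 20
    model = TransitionModel().fit(normal)
    # This run skipped the test step, which the model has never seen happen.
    print(model.anomalies(["checkout", "install deps", "build image", "push image"]))
```

A model like this only catches ordering problems; a step that runs in the expected order but takes far longer than usual needs a separate check, such as comparing durations against history.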

All in all

In such a fast-moving era of technology, with increasing levels of abstraction and separation between application and infrastructure, it’s important for us to understand exactly what our CI/CD processes are doing. We need to be able to monitor deployment health as quickly and closely as we monitor application health. Observability is a crucial capability for any organization looking to move into continuous delivery.