In the age of AI, measurement becomes our superpower

The last few years have felt less like a product roadmap and more like a scene from science fiction. Artificial intelligence didn’t simply arrive; it erupted. In what feels like a blink, we’re building software by prompting instead of programming. Our words now generate code, compose music, translate languages, and create entire digital experiences. Tools like ChatGPT, Cursor, and Sora turned imagination into implementation, reducing what once took teams and months of work to something anyone can accomplish with just an idea and a browser.

But with this explosion of productivity comes a wave of new complexity. Many applications are no longer written line by line by humans. They’re generated, stitched together by models that learn from millions of patterns, often creating logic we didn’t author and don’t fully control.

And when we don’t control the code, we need to control something else: the measurement.

Apps built by apps

The new reality is clear. Developers have become orchestrators. Systems are built by other systems. Huge sections of modern applications are now machine-generated or composed from opaque packages. This means you can no longer assume your team knows exactly how a system behaves just because they shipped it.

The code may run. It may even pass your tests. But is it efficient? Is it performant? Are users able to navigate it, or are they getting stuck in silent loops of frustration?

These are no longer theoretical questions. They are product risks. In this era, measurement is not just a tool for troubleshooting; it’s the only way to understand what your app is truly doing.

The role of RUM in modern engineering

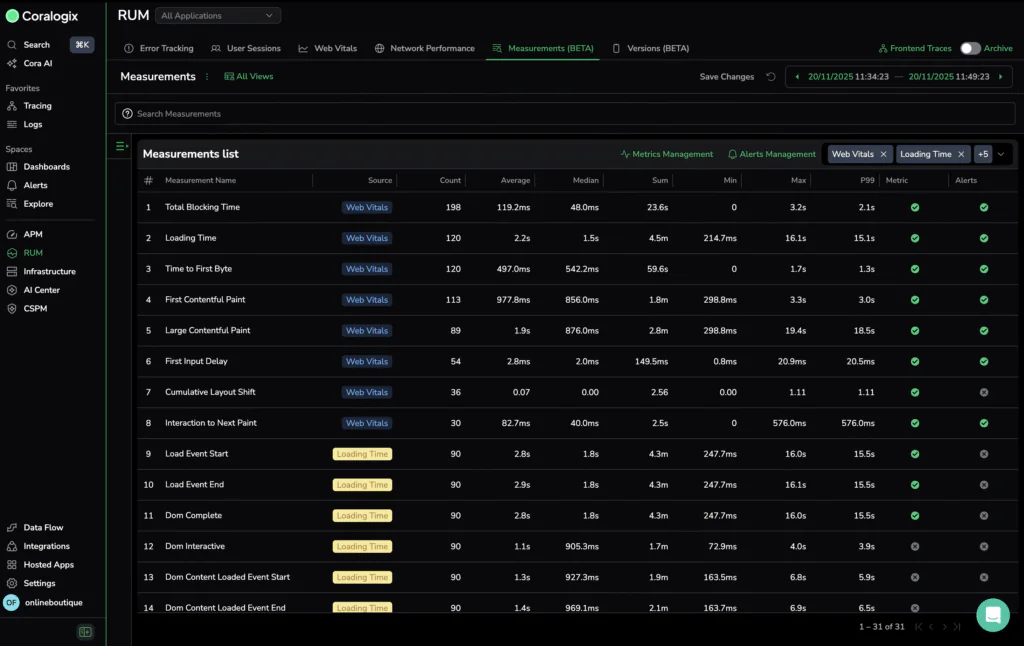

Real User Monitoring has traditionally been seen as a debugging tool. But in today’s AI-powered development landscape, it plays a much more fundamental role.

RUM doesn’t just log errors or collect timings. It surfaces real human experience. It tells you what users saw, what they clicked, how long they waited, and where they dropped off. It gives you insight into the quality of experience across real devices, real networks, and real behavior.
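To make "how long they waited" concrete, here is a minimal sketch of how wait times collected from real sessions could be summarized per device and network segment. The event shape (a segment label plus a wait in milliseconds), the segment names, and the choice of the 75th percentile are all assumptions for illustration, not the API of any particular RUM product.

```python
import math
from collections import defaultdict


def percentile(values, pct):
    """Nearest-rank percentile of a non-empty list; pct is in (0, 100]."""
    ordered = sorted(values)
    rank = math.ceil(pct / 100 * len(ordered))
    return ordered[max(rank, 1) - 1]


def p75_wait_by_segment(events):
    """Group (segment, wait_ms) pairs and return the p75 wait per segment.

    The (segment, wait_ms) tuple is a hypothetical event shape.
    """
    grouped = defaultdict(list)
    for segment, wait_ms in events:
        grouped[segment].append(wait_ms)
    return {segment: percentile(waits, 75) for segment, waits in grouped.items()}


# Hypothetical sample data: the same page measured on two kinds of connections.
events = [
    ("mobile-3g", 4200), ("mobile-3g", 3100), ("mobile-3g", 5200), ("mobile-3g", 2900),
    ("desktop-wifi", 800), ("desktop-wifi", 950), ("desktop-wifi", 700), ("desktop-wifi", 1200),
]
summary = p75_wait_by_segment(events)
```

A percentile is used here instead of an average because a handful of very slow sessions can hide behind a healthy-looking mean.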

It’s no longer just about catching crashes. It’s about accountability. If a system writes your frontend logic, it’s your job to ensure that logic leads to a positive user experience. And the only way to do that is to observe it through your users’ eyes.

When anyone can build, experience becomes the edge

The barrier to shipping an app has never been lower. Low-code tools, AI-driven frameworks, and accessible backend platforms mean anyone with curiosity and time can ship a product. But when everyone can build, differentiation gets harder.

Features and design are easy to replicate. What sets products apart is experience.

Performance, stability, and responsiveness are no longer luxuries. They are the foundation of customer trust. The fastest product wins, and the smoothest experience retains users. In a landscape of clones, the only lasting advantage is quality, and that means measuring everything.

We need new metrics for a new world

Classic metrics like page load time or error count still matter, but they don’t tell the whole story. When software is co-authored by humans and machines, new questions emerge.

How long did it take the system to understand the user’s intent? How consistent are AI-generated components across user sessions? What patterns lead to churn, confusion, or frustration? We need visibility into not just what runs, but what works. Not just where something broke, but where something felt broken.
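One way to turn "felt broken" into a number is a rage-click heuristic: several clicks on the same element in quick succession usually mean the user expected something to happen and nothing did. The sketch below is illustrative only. The `Click` fields and the thresholds (three clicks within one second) are assumptions, not a standard definition.

```python
from dataclasses import dataclass
from typing import Iterable, List, Tuple


@dataclass(frozen=True)
class Click:
    """A single click event; the field names are hypothetical."""
    session_id: str
    target: str        # e.g. a CSS selector for the clicked element
    timestamp_ms: int


def find_rage_clicks(
    clicks: Iterable[Click],
    min_clicks: int = 3,
    window_ms: int = 1000,
) -> List[Tuple[str, str]]:
    """Return (session_id, target) pairs with at least min_clicks clicks inside window_ms."""
    by_key = {}
    for click in clicks:
        by_key.setdefault((click.session_id, click.target), []).append(click.timestamp_ms)

    flagged = []
    for key, times in by_key.items():
        times.sort()
        # Slide a window of min_clicks consecutive clicks over the sorted timestamps.
        for i in range(len(times) - min_clicks + 1):
            if times[i + min_clicks - 1] - times[i] <= window_ms:
                flagged.append(key)
                break
    return flagged


# Hypothetical sample: session s1 hammers the button, session s2 clicks it calmly.
clicks = [
    Click("s1", "#buy", 0), Click("s1", "#buy", 300), Click("s1", "#buy", 600),
    Click("s2", "#buy", 0), Click("s2", "#buy", 5000), Click("s2", "#buy", 9000),
]
frustrated = find_rage_clicks(clicks)
```

Nothing in this example threw an error or crashed, which is the point: the signal only exists in what the user did.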

The developer’s job is changing

As code becomes commoditized, the job of the developer evolves. We are no longer just writing logic. We’re tuning systems, interpreting user behavior, and optimizing loops between code, model, and user. More of our time is spent reading metrics, observing performance, and identifying gaps in the experience.

Real User Monitoring has become the developer’s microscope. It reveals what the logs can’t: not just whether the system is functional, but whether it is usable, intuitive, and performant.

Measurement has to keep up

Releases are faster. Deployments are smaller. Features ship daily. But as velocity increases, so does the risk of introducing friction. You can’t afford to wait a week to discover that your latest release increased bounce rates by twenty percent. Observability needs to operate at the speed of development. You need answers in real time, before the next sprint ships.
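The bounce-rate scenario above can be checked automatically after each release. The following is a rough sketch, assuming a bounce means a session with a single page view and assuming sessions can be split into "before" and "after" a release; both the input shape and the twenty percent threshold are illustrative choices.

```python
from typing import Sequence


def bounce_rate(page_views_per_session: Sequence[int]) -> float:
    """Share of sessions that viewed at most one page (assumed definition of a bounce)."""
    if not page_views_per_session:
        return 0.0
    bounces = sum(1 for views in page_views_per_session if views <= 1)
    return bounces / len(page_views_per_session)


def relative_increase(baseline: float, current: float) -> float:
    """Relative change from baseline to current, e.g. 0.20 means twenty percent higher."""
    if baseline == 0:
        return float("inf") if current > 0 else 0.0
    return (current - baseline) / baseline


def release_regressed(before: Sequence[int], after: Sequence[int], threshold: float = 0.20) -> bool:
    """True when the bounce rate rose by at least the threshold after a release."""
    return relative_increase(bounce_rate(before), bounce_rate(after)) >= threshold


# Hypothetical page-view counts per session, before and after a release.
before = [1, 3, 2, 1, 4, 5, 2, 3, 1, 2]   # 3 of 10 sessions bounced
after = [1, 1, 2, 1, 3, 1, 2, 4, 1, 2]    # 5 of 10 sessions bounced
regressed = release_regressed(before, after)
```

Run against a rolling window of recent sessions, a check like this surfaces a regression within hours of a deployment instead of at the end of the week.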

RUM closes that feedback loop. It replaces assumptions with clarity and empowers teams to take ownership of experience, even when they didn’t write the code themselves.

The age of AI demands the age of responsibility

We didn’t choose this speed. But we do choose how we manage it.

Building in the era of AI means building with tools we don’t always understand. And that makes measurement more important than ever. Not just to monitor performance, but to protect users. To ensure that even as our systems become more powerful, the experience remains human.

Because when your app is shaped by a model, your best insight into how it behaves will come from watching the people who use it.

Learn more at AWS re:Invent

Come see Coralogix RUM. Visit us at booth #1739 at AWS re:Invent.