What “AI-Ready Data” actually means for observability teams

The real AI bottleneck

Organizations deploying AI are learning the same lesson: the challenge isn’t the AI model, it’s the data. Gartner predicts that organizations will abandon 60% of AI projects for lack of AI-ready data to support them. Gartner also reports that 63% of organizations either lack, or aren’t sure they have, the right data management practices for AI.

This isn’t just a challenge for data science teams training models. It applies equally to engineering organizations running AI agents and copilots against telemetry data that describes what systems are doing. Observability data is among the most valuable data any organization produces, but most observability platforms were designed for humans to query it, not for AI to consume it. The gap isn’t about data quality. It’s structural, and closing it requires rethinking the data layer itself from the start.

The shift: from asking a question to conversing with data

For two decades, observability has worked the same way. Something breaks. An engineer opens a dashboard, writes a query, scans the results, forms a hypothesis, writes another query. Repeat this until the root cause is discovered.

This model depends on a human knowing what to ask. It’s search-oriented: when it works, you get back exactly what you query for. But you are limited by those “known unknowns”, the questions you know to ask. You investigate with a spotlight.

AI agents turn that spotlight into a floodlight. Instead of running discrete searches, users can hold an ongoing conversation with their telemetry data at any or all of the operational layers: infrastructure, application, or user behavior. An SRE can ask “Why is latency increasing in Europe?” and the agent investigates, correlating across logs, metrics, traces, and security data, then surfaces a coherent answer. A product manager can ask “What’s the impact of checkout errors on conversion this week?” and get an answer that connects system behavior to business outcomes.

And critically, this operational intelligence is no longer confined to the engineers who know the tooling. When insight is delivered through conversation rather than query syntax, it becomes accessible up and down the organization, from SREs and platform teams to product, marketing, finance, security, and executive leadership.

This is the vision Coralogix was built around, and the architecture we’ve invested in for years is what makes it possible. But realizing it requires something most observability platforms can’t deliver: a data layer that’s genuinely ready for AI.

What “AI-ready” actually means for telemetry

Gartner frames AI-readiness around several requirements: data must be aligned to the specific AI use case, semantically meaningful, sourced from trusted pipelines, and transparent in its lineage. Traditional data quality standards (clean it, normalize it, remove the outliers) don’t apply. AI often needs the outliers. It needs representative data, including the messy parts.

For observability, translating these requirements into practice means your data platform needs to deliver four architectural capabilities.

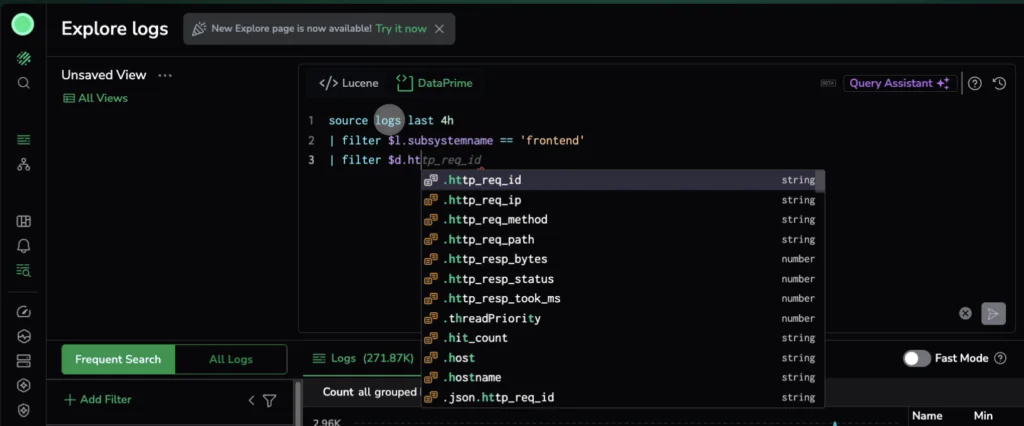

1. A query language expressive enough for AI to think in

Most observability platforms are optimized for a simple pattern: give me all logs where app = payments and severity = error. An AI agent can’t operate this way. It needs to filter, extract, join across data types, aggregate, and correlate, all in a single operation. If the query language is limited, the agent’s intelligence is capped by the expressiveness of the language it speaks.

This is why query language design matters so much in the AI era. DataPrime, the query language at the core of Coralogix, was purpose-built for this: a composable, piped syntax that lets agents (and humans) express complex analyses that read linearly and scale naturally. DataPrime is also the execution layer for Olly, Coralogix’s autonomous observability AI agent, whose specialized agents for logs, traces, metrics, security, and correlation all reason through DataPrime to construct analytical workflows that would have taken a senior SRE hours to assemble manually.
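To make the idea of a composable, piped query concrete, here is a minimal Python sketch. It is not DataPrime syntax and not how Coralogix executes queries; the helper names (`pipe`, `where`, `join_on`, `avg_by`) and the sample records are assumptions made up for illustration. It only shows how filter, join, and aggregate stages can chain into one linear expression.

```python
from collections import defaultdict
from functools import reduce

def pipe(rows, *stages):
    """Feed the output of each stage into the next, left to right."""
    return reduce(lambda acc, stage: stage(acc), stages, rows)

def where(pred):
    """Stage: keep only rows for which pred(row) is true."""
    return lambda rows: [r for r in rows if pred(r)]

def join_on(key, other, prefix):
    """Stage: inner-join rows against `other` on `key`, prefixing joined fields."""
    index = {o[key]: o for o in other}
    def stage(rows):
        out = []
        for r in rows:
            match = index.get(r[key])
            if match is not None:
                extra = {f"{prefix}{k}": v for k, v in match.items() if k != key}
                out.append({**r, **extra})
        return out
    return stage

def avg_by(group_key, value_key):
    """Stage: average `value_key` per distinct value of `group_key`."""
    def stage(rows):
        sums = defaultdict(lambda: [0.0, 0])  # group -> [running total, count]
        for r in rows:
            acc = sums[r[group_key]]
            acc[0] += r[value_key]
            acc[1] += 1
        return {g: total / n for g, (total, n) in sums.items()}
    return stage

# Hypothetical sample telemetry: logs and trace spans sharing a trace_id.
logs = [
    {"trace_id": "t1", "app": "payments", "severity": "error", "region": "eu"},
    {"trace_id": "t2", "app": "payments", "severity": "info", "region": "eu"},
    {"trace_id": "t3", "app": "payments", "severity": "error", "region": "us"},
    {"trace_id": "t4", "app": "payments", "severity": "error", "region": "eu"},
]
spans = [
    {"trace_id": "t1", "duration_ms": 900},
    {"trace_id": "t3", "duration_ms": 200},
    {"trace_id": "t4", "duration_ms": 1100},
]

# One linear expression: filter logs, join to spans, aggregate by region.
latency_by_region = pipe(
    logs,
    where(lambda r: r["app"] == "payments" and r["severity"] == "error"),
    join_on("trace_id", spans, prefix="span_"),
    avg_by("region", "span_duration_ms"),
)
print(latency_by_region)
```

The point of the sketch is the shape, not the helpers: each stage takes rows and returns rows, so an agent can add or remove a step without restructuring the rest of the query.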

2. A living schema that evolves with your data

Telemetry doesn’t follow rigid schemas. A log field that appears at 4:00 PM might vanish by 5:00 PM. A field called duration might be a number in one service and a string in another. Traditional platforms handle this with dynamic mapping, locking in a field’s type on first appearance and breaking quietly when reality diverges.

For AI agents, this is catastrophic. An agent that doesn’t know which fields are available for a given time window will hallucinate queries or silently miss critical signals. Coralogix’s Schema Store maintains a living inventory of every field, type, and value across all ingested telemetry, scoped by time. When Olly investigates an incident, it queries the Schema Store to understand the actual shape of the data. Fewer mistakes. Faster investigations. No hallucinated fields.
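As a rough illustration of what a time-scoped schema buys an agent, the following Python sketch keeps a small field inventory keyed by hour and checks a proposed query against it. The `FieldInventory` class, its methods, and the hour-bucket granularity are all hypothetical; this is not the Schema Store’s API or implementation, only a toy model of the idea.

```python
from collections import defaultdict

class FieldInventory:
    """Hypothetical catalog: hour bucket -> field name -> set of observed type names."""

    def __init__(self):
        self._seen = defaultdict(lambda: defaultdict(set))

    def observe(self, hour, record):
        """Record every field and its Python type name for the given hour."""
        for field, value in record.items():
            self._seen[hour][field].add(type(value).__name__)

    def fields_between(self, start_hour, end_hour):
        """Return {field: sorted type names} observed in [start_hour, end_hour]."""
        merged = defaultdict(set)
        for hour, fields in self._seen.items():
            if start_hour <= hour <= end_hour:
                for field, types in fields.items():
                    merged[field] |= types
        return {f: sorted(t) for f, t in merged.items()}

    def unknown_fields(self, query_fields, start_hour, end_hour):
        """Return the queried fields that never appeared in the window."""
        known = self.fields_between(start_hour, end_hour)
        return [f for f in query_fields if f not in known]

inventory = FieldInventory()
# At 16:00 `duration` is a number and `cart_id` exists.
inventory.observe(16, {"duration": 120, "cart_id": "c-9"})
# At 17:00 `duration` arrives as a string and `cart_id` is gone.
inventory.observe(17, {"duration": "120ms"})

print(inventory.fields_between(16, 17))
print(inventory.unknown_fields(["duration", "cart_id"], 17, 17))
```

An agent that consults such an inventory before building a query can see that `duration` has two types across the window and that `cart_id` does not exist in the 17:00 bucket, instead of discovering either fact through an empty or failed query.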

3. Full-fidelity data access without the indexing tax

Here’s the economic trap: traditional observability platforms require indexing for data to be searchable. Indexing is expensive. So organizations sample aggressively, drop low-priority data, and archive everything else to cold storage that’s effectively inaccessible. AI agents end up with access to a fraction of the telemetry, and the patterns buried in the “low-value” data, where the most interesting signals hide, are invisible.

Coralogix stores telemetry in the customer’s own cloud storage in open Parquet format. The Streama engine processes data in-stream at ingestion, analyzing, classifying, and alerting in real time, while writing everything to the customer’s data lake. All data remains queryable at interactive speeds, indefinitely. No sampling. No blind spots. And because data lives in the customer’s account, there’s no vendor lock-in.

4. Governed domains that give AI the right context

An agent searching through millions of undifferentiated log lines will be slow, expensive, and noisy. What agents need is controlled context, data organized into semantic boundaries that scope investigations to the relevant domain.

Dataspaces and Datasets in Coralogix provide this: data is dynamically routed into named, governed datasets based on attributes like team, environment, or service. Each dataset has its own schema, retention policy, and access controls. AI agents scope their investigations to the relevant domain, reaching correct answers faster with less noise.
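Attribute-based routing into governed datasets can be sketched in a few lines of Python. Everything here is assumed for illustration: the `Dataset` dataclass, the `route` and `scoped_search` functions, the dataset names, roles, and retention values are invented, and none of it reflects how Coralogix configures or enforces Dataspaces and Datasets.

```python
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class Dataset:
    name: str
    matches: Callable[[dict], bool]   # routing rule over record attributes
    retention_days: int               # per-dataset retention policy
    allowed_roles: frozenset          # per-dataset access control
    records: list = field(default_factory=list)

def route(record, datasets, default):
    """Append the record to the first dataset whose rule matches, else to default."""
    for ds in datasets:
        if ds.matches(record):
            ds.records.append(record)
            return ds.name
    default.records.append(record)
    return default.name

def scoped_search(dataset, role, pred):
    """Search one dataset only, after checking the caller's role."""
    if role not in dataset.allowed_roles:
        raise PermissionError(f"role {role!r} may not read {dataset.name!r}")
    return [r for r in dataset.records if pred(r)]

checkout_prod = Dataset(
    "checkout-prod",
    lambda r: r.get("team") == "checkout" and r.get("env") == "prod",
    retention_days=90,
    allowed_roles=frozenset({"sre", "checkout-dev"}),
)
security_audit = Dataset(
    "security-audit",
    lambda r: r.get("team") == "security",
    retention_days=365,
    allowed_roles=frozenset({"secops"}),
)
unrouted = Dataset("unrouted", lambda r: True, 7, frozenset({"sre"}))

incoming = [
    {"team": "checkout", "env": "prod", "msg": "payment declined"},
    {"team": "checkout", "env": "staging", "msg": "payment declined"},
    {"team": "security", "env": "prod", "msg": "login failed"},
]
placements = [route(r, [checkout_prod, security_audit], unrouted) for r in incoming]
print(placements)

# An agent acting as an SRE searches only the checkout production domain.
hits = scoped_search(checkout_prod, "sre", lambda r: "declined" in r["msg"])
print(hits)
```

The design choice worth noticing is that scoping happens before search: the agent never scans the security or staging records, and the access check sits on the dataset rather than on each query.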

System Datasets, a new category of data that Coralogix generates automatically, take this further by exposing platform behavior itself as queryable telemetry: who changed what, which queries ran, and how data is flowing through the system. This is the lineage and governance layer that Gartner identifies as critical, delivered as first-class data rather than an afterthought.

From asking questions to an intelligence engine

The architectural capabilities above aren’t incremental improvements. Together, they transform what an observability platform can do. Rather than acting as a warehouse that stores telemetry and retrieves it on demand, Coralogix continuously produces operational intelligence from raw telemetry: clustering errors into patterns, pre-computing SLO trends, and surfacing cross-source correlations and cascading failure patterns automatically, without anyone writing a query.
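For readers unfamiliar with what “clustering errors into patterns” looks like, here is a deliberately simple Python sketch that masks variable tokens and counts the resulting templates. The masking rule and the `template_of` and `cluster` names are assumptions for illustration; this is a common textbook approach, not a description of the algorithm Coralogix uses.

```python
import re
from collections import Counter

# Mask standalone numbers and dotted numbers (such as IP addresses).
_VARIABLE = re.compile(r"\b\d+(?:\.\d+)*\b")

def template_of(message):
    """Replace variable-looking tokens with a placeholder to get a template."""
    return _VARIABLE.sub("<*>", message)

def cluster(messages):
    """Count how many messages share each template."""
    return Counter(template_of(m) for m in messages)

errors = [
    "Timeout after 3000 ms calling payments",
    "Timeout after 4500 ms calling payments",
    "Connection refused by 10.0.0.12:5432",
    "Connection refused by 10.0.0.7:5432",
    "User 42 not found",
]
patterns = cluster(errors)
for template, count in patterns.most_common():
    print(count, template)
```

Five raw lines collapse into three patterns, which is the kind of summary an agent can reason over far more cheaply than the raw stream.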

Olly is the most visible expression of this shift: a multi-agent architecture in which telemetry-specialized AI agents reason through DataPrime, scoped by Datasets and informed by a Knowledge Base built from Coralogix’s platform intelligence. An engineer describes a problem in plain language, and Olly finds the root cause, connects signals to business impact, and suggests a fix.

What this means in practice

If you’re evaluating whether your observability data is ready for AI, here are the questions worth asking:

- Can an AI agent express complex analytical questions against your data? If your query language is limited to key-value lookups, your agent’s intelligence hits a ceiling.

- Does your platform track schema evolution over time? Without a living schema, AI agents hallucinate queries against fields that don’t exist.

- Is all your data queryable, or just the data you’ve indexed? If you’re sampling or dropping data to manage costs, your AI agents have blind spots. Look for architectures that decouple query access from indexing cost.

- Is your data organized into semantic domains? Flat, undifferentiated data makes AI investigations slower and noisier.

- Do you have visibility into data lineage and platform behavior? If you can’t trace how data flows through your system, you can’t trust the intelligence produced from it.

The compounding advantage

Organizations that get this right unlock a compounding cycle: more data retained means richer context for AI agents, which means better pattern recognition, which means more teams across the organization find value in operational data, driving further investment in data quality and governance that makes AI even more effective.

This is why the choice of observability platform matters more now than it ever has. It’s no longer just about dashboards and alerts. It’s about whether your data layer can power the AI-driven workflows that will define the next generation of operational excellence.

Coralogix was built on the premise that telemetry data should be processed in-stream, stored in the customer’s environment, queried without indexing, and organized into governed domains. That architecture was designed for cost efficiency and operational flexibility. It turns out it’s also exactly what AI needs.

The path from scattered breadcrumbs to root cause is no longer a manual investigation. It’s a conversation, and it starts the moment your data hits the platform.